The strongest B2B AI money trend right now is not another agent launch. It is the quiet transfer of AI infrastructure cost from technology providers to business customers.

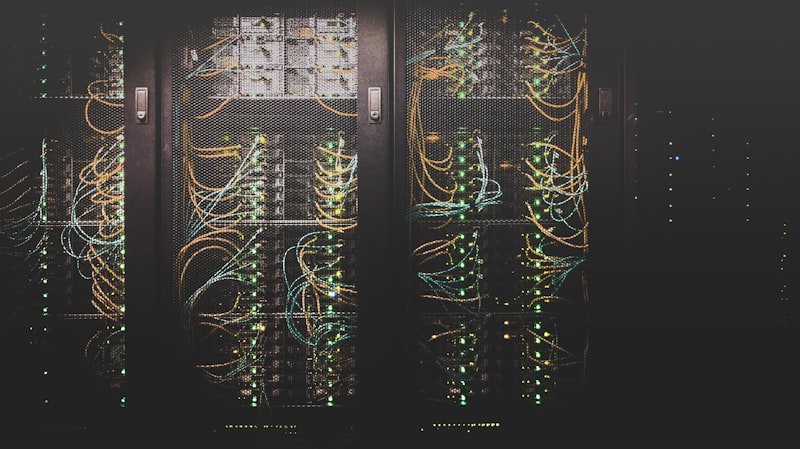

Over the last few days, the signal became hard to miss. The Guardian reported on May 5 that Google, Microsoft, Amazon, and Meta showed the same financial pattern in recent earnings: AI demand is pushing capital expenditure higher, especially through data centers and memory chips. Google, Microsoft, and Meta raised capex expectations by tens of billions. Amazon's projected spending was described as around $200 billion for the year. Microsoft said component costs were running $25 billion above what it had expected, driven partly by memory price increases. Samsung warned that memory supply remains far below customer demand, and data centers are expected to consume about 70% of memory chips produced in 2026.

Axios framed the same pressure from the investor side. The biggest technology companies are on track to spend about $700 billion on AI this year, according to Goldman Sachs estimates cited by Axios, with the broader bill potentially reaching more than $1 trillion by next year. The question investors want answered is simple: is the monetization engine firing fast enough to justify that spend?

For enterprise buyers, that question lands in procurement. Every vendor selling AI into the business now has a cost story. Some will charge directly for usage. Some will bundle AI into a higher platform tier. Some will raise renewal prices across the board. Some will limit generous AI features after adoption. Some will route customers into premium cloud, data, security, or governance packages that quietly carry the margin burden.

This is why AI cost pass-through belongs in the B2B section. The real commercial battle is no longer whether AI tools are useful. It is who pays for the compute curve when AI becomes a normal part of sales, support, engineering, finance, HR, analytics, and operations.

Businesses that keep buying AI as if it were ordinary SaaS will overpay. Businesses that treat AI pricing as a measurable operating input can negotiate better contracts, avoid waste, and protect the ROI promised in Enterprise AI Adoption.

AI Is Changing the Shape of Software Margins

Classic SaaS margins were built on a beautiful idea: once the product exists, each additional customer is relatively cheap to serve. There are support costs, cloud costs, sales costs, and product costs, but software can scale with high gross margins because usage does not usually create a massive variable cost for every request.

AI weakens that assumption.

A normal CRM field update is cheap. An AI-generated sales summary, account research brief, call transcript, lead score, email draft, and next-best-action recommendation are not free. A normal support ticket search is cheap. A voice agent that listens, reasons, retrieves policy data, generates a response, and logs the case carries model, memory, storage, latency, and monitoring costs. A normal analytics dashboard is cheap to refresh on a schedule. A natural-language analyst that repeatedly queries messy business data can become expensive if usage is not governed.

That does not mean AI software is a bad business. It means the unit economics are different. The vendor has to recover inference cost, infrastructure commitments, model provider fees, specialized engineering, safety review, support, compliance, and the capital burden of making AI feel instant. If the vendor owns the whole stack, it still has to pay for chips, data centers, power, and memory. If it rents model access, it pays another provider and has to preserve its own margin on top.

The cost does not disappear. It moves.

That is the first thing B2B buyers need to understand. When a vendor says AI is included, the buyer should ask: included how, for how long, under what usage limits, and at what renewal risk? "Included" often means the vendor is subsidizing adoption until it has better usage data. Once the customer workflow depends on the AI feature, pricing power shifts back to the vendor.

That is exactly why the current infrastructure spending news matters. The AI stack is not floating above the economy. It is buying scarce memory, filling data centers, consuming power, and forcing cloud providers to spend at a speed that demands monetization. Eventually, that pressure reaches the invoice.

The Incumbent Advantage Is Also a Pricing Advantage

Gartner's January forecast put worldwide AI spending at $2.52 trillion in 2026, up 44% year over year. For B2B buyers, the spending number was not the most important line. Gartner also argued that because AI remains in the trough of disillusionment through 2026, it will most often be sold to enterprises by incumbent software providers rather than bought as a separate moonshot project.

That is a huge pricing signal.

Incumbents already control the system of record, the admin console, the procurement relationship, the security review, the user identity layer, and the renewal calendar. If a company already runs its sales team on one CRM, its employee data in one HR platform, its finance workflows in one ERP, and its documents in one productivity suite, the easiest AI purchase is often the vendor's native add-on.

That creates a rational buying path. Native AI is usually easier to approve than a new standalone vendor. It can inherit permissions, data context, compliance language, and workflow placement. For a CIO under pressure to reduce tool sprawl, this is attractive.

But that convenience has a price. The incumbent can bundle AI into a more expensive edition, attach usage-based credits, restrict advanced features to enterprise tiers, or make AI governance a paid prerequisite. The buyer may think it is buying productivity. The vendor may be using AI to repackage the account around a higher average contract value.

This connects directly to the pattern in AI Cloud Capacity Crunch. When cloud and software providers face capacity constraints, the most profitable customers get priority. Enterprise AI pricing becomes a way to ration capacity, protect margins, and identify customers willing to pay for production-grade usage.

The danger for buyers is not that vendors charge for AI. They should. The danger is paying for vague AI access without understanding the cost driver, usage pattern, or business outcome.

The New Enterprise AI Invoice

The old software invoice was mostly seats, editions, support, and maybe storage. The new AI invoice is more complicated.

A buyer may now see seat pricing for employees who can access AI features, usage pricing for tokens or actions, workflow pricing for automated processes, data pricing for indexing and retrieval, governance pricing for audit logs and admin controls, and premium support pricing for production reliability. In some cases, the line item will not say "AI" at all. It will appear as a platform uplift, cloud consumption increase, API credit package, data processing tier, or enterprise enablement fee.

That matters because different pricing units create different behavior.

Seat-based AI pricing is simple, but it can hide low adoption. If 500 employees have access and only 80 use the feature weekly, the effective cost per active user is much higher than the contract suggests. Usage-based pricing is fairer when adoption is uneven, but it can punish teams that finally find a valuable workflow and scale it. Workflow pricing can align better with outcomes, but only if the workflow is clearly defined and measurable. Platform uplift pricing can be convenient, but it can also make the buyer pay for AI whether or not the business uses it.
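The seat-pricing arithmetic above can be made concrete with a minimal sketch. The 500 licensed seats and 80 weekly active users come from the example in the text; the $30 monthly per-seat price and the function name are hypothetical, chosen only for illustration.

```python
def cost_per_active_user(seats: int, price_per_seat: float, active_users: int) -> float:
    """Effective monthly cost per employee who actually uses the AI feature."""
    if active_users <= 0:
        raise ValueError("active_users must be positive")
    return seats * price_per_seat / active_users


# 500 seats and 80 weekly active users are taken from the example in the text.
# The $30 per-seat monthly price is an assumed figure, not a quoted vendor price.
PRICE_PER_SEAT = 30.0
effective = cost_per_active_user(seats=500, price_per_seat=PRICE_PER_SEAT, active_users=80)

print(f"Contract price per seat:        ${PRICE_PER_SEAT:.2f}")
print(f"Effective cost per active user: ${effective:.2f}")
print(f"Multiplier over contract price: {effective / PRICE_PER_SEAT:.2f}x")
```

Whatever the real seat price is, the multiplier is simply licensed seats divided by active users, so at 80 active users out of 500 the buyer pays 6.25 times the contract price for each person who actually uses the feature.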

The right pricing model depends on the workflow. A sales team using AI to summarize calls and draft follow-ups needs a different contract shape than a support organization using AI to deflect tickets. A finance team using AI for variance commentary needs a different usage ceiling than an engineering team using coding agents. A legal team using AI for document review needs stronger audit terms than a marketing team using AI for campaign drafts.

This is why AI procurement can no longer be handled as a generic renewal conversation. It has to be tied to the operating model.

The buyer should know which workflows use AI, which cost unit drives the invoice, which usage is high-value, which usage is noise, and which vendor has the right to change limits after the first term. Without that map, AI turns into a budget leak.

The CFO Question: What Are We Paying Per Outcome?

The practical answer is not to block AI spending. That would be lazy finance. The better answer is to translate AI cost into business outcomes before the renewal.

For customer support, the question is not how many AI conversations the vendor allows. It is cost per resolved issue, escalation rate, customer satisfaction, refund leakage, and quality control. If the AI feature reduces human handling time but increases rework, the invoice is lying. If it reduces handle time and preserves quality, the vendor deserves to be paid.
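One way to check whether the invoice is lying is to divide total spend by issues that stayed resolved rather than by conversations handled. The sketch below assumes a simple definition (AI fee plus human handling cost, divided by resolved issues minus reopened ones). All figures and names are hypothetical.

```python
def cost_per_clean_resolution(ai_fee: float, human_cost: float,
                              resolved: int, reopened: int) -> float:
    """Total support spend divided by issues that were resolved and not reopened."""
    clean = resolved - reopened
    if clean <= 0:
        raise ValueError("no cleanly resolved issues in this period")
    return (ai_fee + human_cost) / clean


# Hypothetical month before the AI feature: no AI fee, higher human handling cost.
before = cost_per_clean_resolution(ai_fee=0, human_cost=40_000, resolved=4_000, reopened=200)

# Hypothetical month after: handling cost drops, but rework (reopened issues) rises.
after = cost_per_clean_resolution(ai_fee=6_000, human_cost=30_000, resolved=4_000, reopened=800)

print(f"Before AI: ${before:.2f} per clean resolution")
print(f"After AI:  ${after:.2f} per clean resolution")
```

In this made-up scenario total spend falls from $40,000 to $36,000, yet the cost of each issue that stays resolved goes up. That is the pattern the paragraph above warns about: lower handling time paid for with more rework.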

For sales, the question is not how many AI summaries are generated. It is whether speed-to-lead improves, whether reps spend more time selling, whether CRM hygiene improves, whether follow-up quality changes, and whether pipeline conversion moves. This is the same discipline behind AI CRM Automation ROI for SMBs. The value is not the generated text. The value is cleaner revenue execution.

For engineering, the question is not how many code completions happen. It is cycle time, review quality, defect rate, onboarding speed, and developer focus. For finance, the question is close speed, exception detection, forecast accuracy, and analyst leverage. For HR, it is time-to-screen, candidate quality, compliance, and manager workload.

AI cost pass-through becomes acceptable when it is attached to a measurable operating improvement. It becomes margin extraction when it is attached to vague productivity language and a forced platform upgrade.

This is the procurement line B2B teams need to hold.

Why Memory Prices Matter to a Software Buyer

At first glance, memory chips sound like a hardware supply-chain issue, not a software purchasing issue. In 2026, that separation is false.

AI software depends on infrastructure that is currently expensive and scarce. If data centers absorb the majority of available memory supply, the cost of serving AI workloads rises across the stack. Cloud providers spend more on hardware. Model providers spend more on capacity. SaaS vendors either pay higher infrastructure bills directly or pay more to the model providers they depend on. Customers then see price pressure through usage limits, add-on fees, higher tiers, and stricter contract terms.

The Guardian's reporting makes the pass-through chain visible. Rising memory demand is not only affecting AI data centers. It is already pressuring device makers and component suppliers. Texas Instruments raised prices on some components by 15% to 85% at the start of April, according to the same report, while shifting attention toward higher-margin data-center demand. Samsung and SK Hynix are benefiting from the shortage because AI buyers are willing to pay.

B2B software buyers do not need to become semiconductor analysts. But they do need to understand that the AI feature in a SaaS product is exposed to the same physical supply chain. When a vendor says its AI tier is expensive because compute is expensive, that may be true. The buyer's job is to separate legitimate cost recovery from opportunistic bundling.

That means asking sharper questions:

- What usage is included in the base price?
- What happens if usage doubles?
- Are unused AI credits carried forward or lost?
- Can departments be capped separately?
- Are training, retrieval, storage, and inference priced separately?
- Does the vendor use customer data to improve shared models?
- What audit logs are included?
- What happens if the vendor changes model providers?
- Can the customer downgrade AI features without losing core platform access?

Those questions are not legal trivia. They determine whether AI spend remains controllable after adoption.

The Portfolio Deployment Risk

Another recent signal points in the same direction. PYMNTS covered the May 4 moves by Amazon, Anthropic, and OpenAI as a shift toward portfolio-level AI deployment, where technology is distributed across entire networks of companies rather than sold one enterprise at a time.

That model can accelerate adoption. If a private-equity firm, cloud provider, or AI lab can push a deployment pattern across dozens or hundreds of companies, implementation gets faster and vendors gain distribution. It may also improve standardization, because repeated deployments create reusable playbooks.

But it creates a pricing risk for operating companies.

When AI is introduced through a portfolio-level program, the buyer may inherit preferred vendors, standard packages, shared implementation partners, and benchmarked pricing. That can be efficient. It can also reduce competitive tension if the operating company treats the program as already decided. A portfolio company still needs to ask whether the package fits its workflows, whether the economics are visible, and whether the AI vendor is being selected for business fit or ecosystem convenience.

This is where B2B leaders can learn from Enterprise AI Deployment Startups. Deployment matters, but deployment without measurement becomes an expensive services story. The same is true for AI pricing. A portfolio rollout should come with a cost model, adoption targets, usage governance, and renewal discipline.

Otherwise, portfolio-level AI becomes portfolio-level margin leakage.

The New Pricing Playbook for B2B Buyers

The first move is to build an AI usage inventory before renewal season. List every vendor with AI features, every department using them, the pricing unit, the contract limits, the current adoption level, and the business metric the feature is supposed to improve. This does not need to be perfect. It needs to be good enough to reveal where money is going.
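The inventory described above does not need special tooling. A minimal sketch, assuming one record per vendor and department, might look like the following. The field names, sample rows, and the 25% utilization threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AIUsageEntry:
    vendor: str
    department: str
    pricing_unit: str    # e.g. "seat", "conversation", "token"
    contract_limit: int  # units included in the contract for the period
    units_used: int      # units actually consumed in the period
    target_metric: str   # business metric the feature is supposed to improve

    @property
    def utilization(self) -> float:
        """Share of contracted units actually used."""
        return self.units_used / self.contract_limit if self.contract_limit else 0.0


def review_candidates(entries, threshold=0.25):
    """Return entries whose utilization is below the (assumed) review threshold."""
    return [e for e in entries if e.utilization < threshold]


# Illustrative rows only; vendor names and numbers are invented.
inventory = [
    AIUsageEntry("CRM vendor", "Sales", "seat", 500, 80, "speed-to-lead"),
    AIUsageEntry("Support vendor", "Support", "conversation", 10_000, 9_200,
                 "cost per resolved issue"),
]

for entry in review_candidates(inventory):
    print(f"{entry.vendor} ({entry.department}): {entry.utilization:.0%} of "
          f"contracted {entry.pricing_unit}s used, metric: {entry.target_metric}")
```

Even a rough table like this shows which contracts are mostly shelfware and which are close to their limits and likely to hit overage before renewal.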

The second move is to separate experimentation from production. Experimental AI should be capped, cheap, and reversible. Production AI should be governed, measured, supported, and tied to a clear owner. The worst contracts mix the two: broad access for vague experimentation at production-grade prices.

The third move is to negotiate usage bands rather than unlimited optimism. If a workflow is expected to grow, the buyer should know the next price step before adoption expands. A good contract makes success affordable. A bad contract punishes success by turning real usage into surprise overage.
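To see why bands matter, compare a contract with pre-negotiated price steps against one that charges a flat overage rate past the included volume. The sketch below uses hypothetical band limits and unit prices; real contracts define both differently.

```python
# Hypothetical negotiated bands: (upper unit limit of the band, price per unit in that band).
BANDS = [(10_000, 0.05), (50_000, 0.04), (float("inf"), 0.03)]

# Hypothetical alternative: 10,000 units at the base price, then a flat overage rate.
INCLUDED_UNITS = 10_000
BASE_PRICE = 0.05
OVERAGE_PRICE = 0.10


def banded_cost(units: int, bands=BANDS) -> float:
    """Cost when each unit is priced by the band it falls into."""
    cost, lower = 0.0, 0
    for upper, price in bands:
        if units <= lower:
            break
        cost += (min(units, upper) - lower) * price
        lower = upper
    return cost


def overage_cost(units: int) -> float:
    """Cost when usage beyond the included volume is billed at the overage rate."""
    base = min(units, INCLUDED_UNITS) * BASE_PRICE
    extra = max(units - INCLUDED_UNITS, 0) * OVERAGE_PRICE
    return base + extra


for units in (10_000, 20_000, 60_000):
    print(f"{units:>6} units: banded ${banded_cost(units):,.2f} "
          f"vs overage ${overage_cost(units):,.2f}")
```

Under these assumed prices the two contracts cost the same at the included volume, and the gap widens as the workflow succeeds. The point is not the specific rates but that the next price step is known before adoption expands.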

The fourth move is to demand observability. If a vendor charges for AI, it should provide usage dashboards by team, workflow, user, and feature. Without that visibility, the buyer cannot manage behavior. AI procurement without usage data is just trust-based spending.

The fifth move is to preserve exit rights. AI features should not become a trapdoor that locks the customer into a higher platform tier forever. If the AI layer fails to deliver value, the customer should be able to remove it without disrupting the core system of record.

The sixth move is to compare native AI with focused workflow vendors. Incumbents will win many deals because they are convenient. They should not win automatically. A focused vendor may deliver better economics for a narrow workflow, especially when the buyer can measure the outcome tightly.

The Bottom Line

AI cost pass-through is not a future issue. It is already forming in cloud earnings, chip shortages, SaaS packaging, and enterprise deployment models.

The good news is that this makes AI buying more rational. The market is moving away from novelty and toward cost, capacity, margin, and measurable output. That is healthier for serious businesses. It rewards vendors that can prove value and buyers that know what they are buying.

The bad news is that vague AI enthusiasm is about to get expensive. If a company cannot explain which AI workflows produce value, it will struggle to tell the difference between a fair price increase and a margin grab.

The winners in B2B AI will not be the teams that buy the most tools. They will be the teams that understand the unit economics of the workflows they automate.

AI may still be sold as intelligence. In enterprise software, it is increasingly priced as infrastructure.
